This is a personal project that I carried out during the final two years of my bachelor's studies, supervised by Asim Evren Yantac. Emotionscapes has three parts: emotion maps, the Emotionscape installation, and the mediated Emotionscape for augmented reality applications. The first two can be seen as exploratory studies for the third. The aim of the whole project is to understand emotions and to look for answers to the question 'How can we design for emotional communication?'

Throughout this project, I conducted ethnographic research and analysis, developed the concepts, painted the maps in watercolour, and prototyped the installation. Together with my peer Sinem Semsioglu, I developed the first prototype of the Emotionscape augmented reality application for HoloLens.

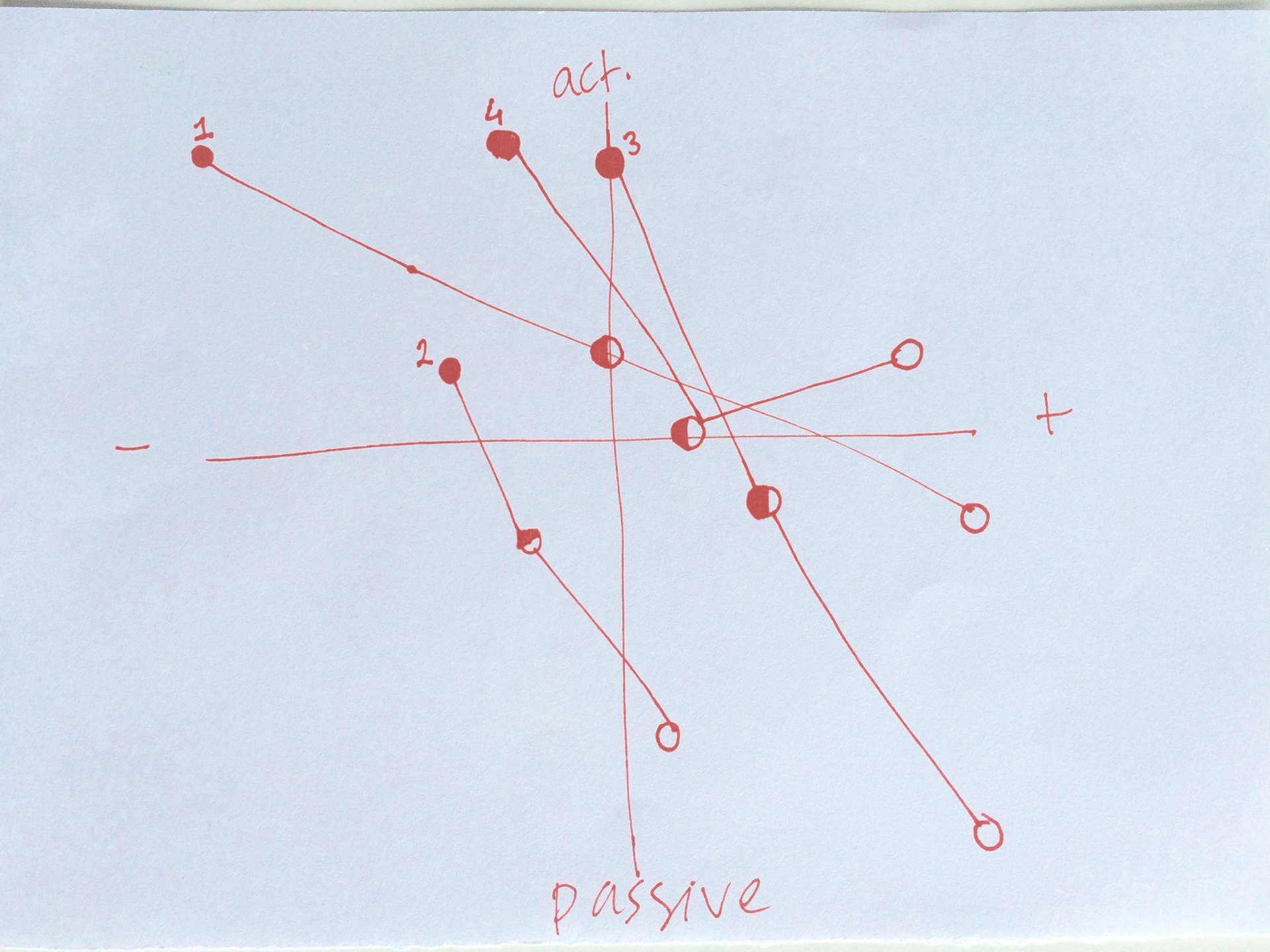

A small representation of how the project made me feel

1. Emotion Maps

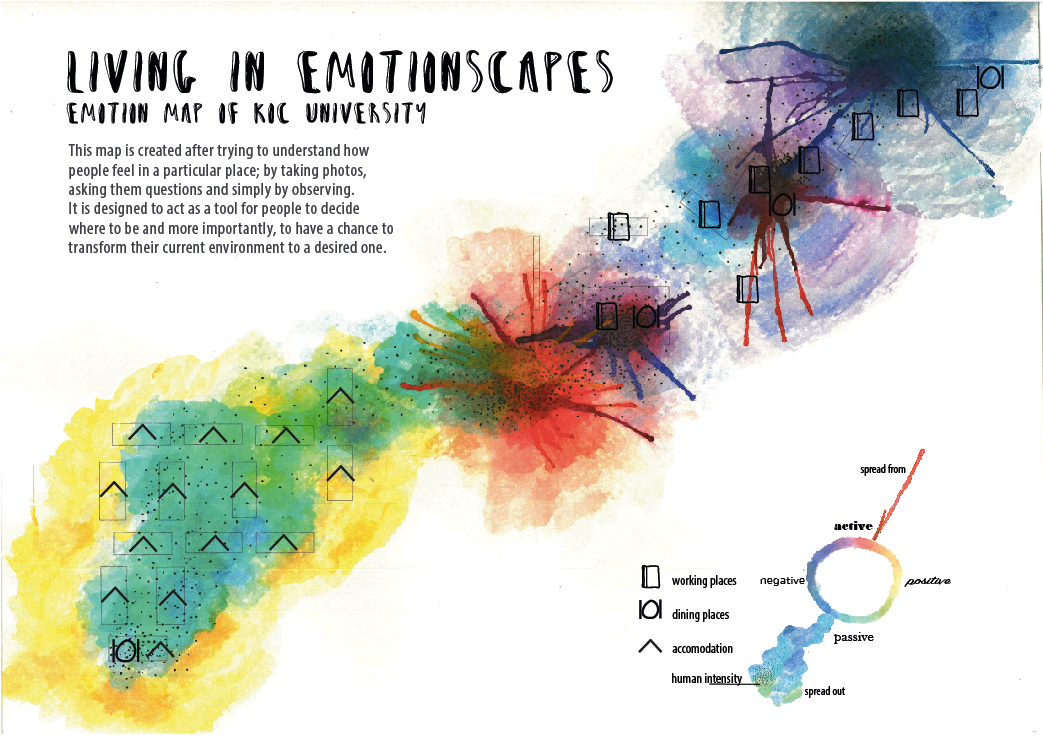

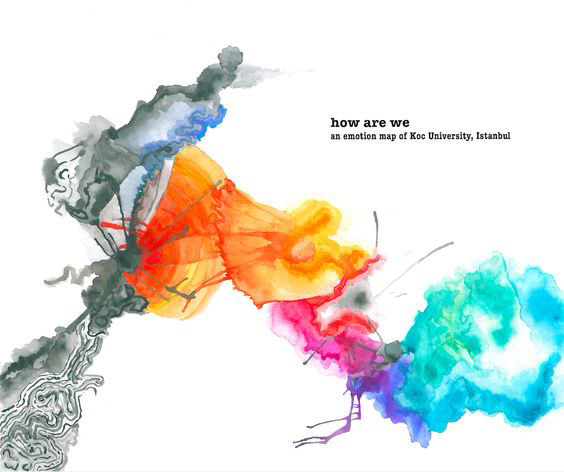

Emotion map of Koc University, Istanbul

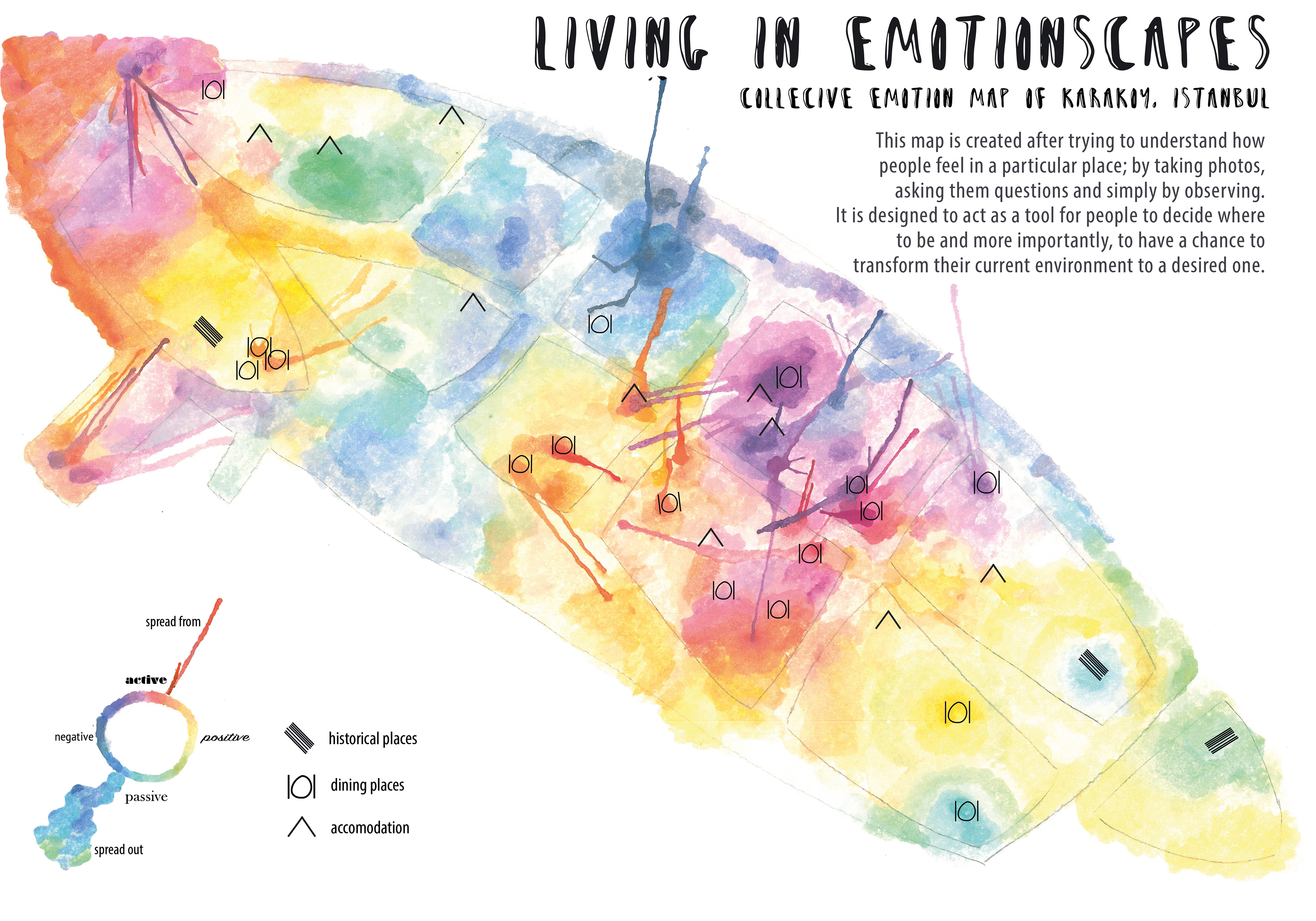

Emotion map of Karakoy, Istanbul

Emotions are one of the key factors that shape our relationships with ourselves, with the people around us, and even with inanimate objects. Making emotions tangible makes it easier to name places as “happy” or “depressed”, which gives people a chance to understand what they are feeling and where they are feeling it. The maps are painted in watercolor, and the emotion colors are based on the valence-arousal emotion wheel.

Research & Ideation

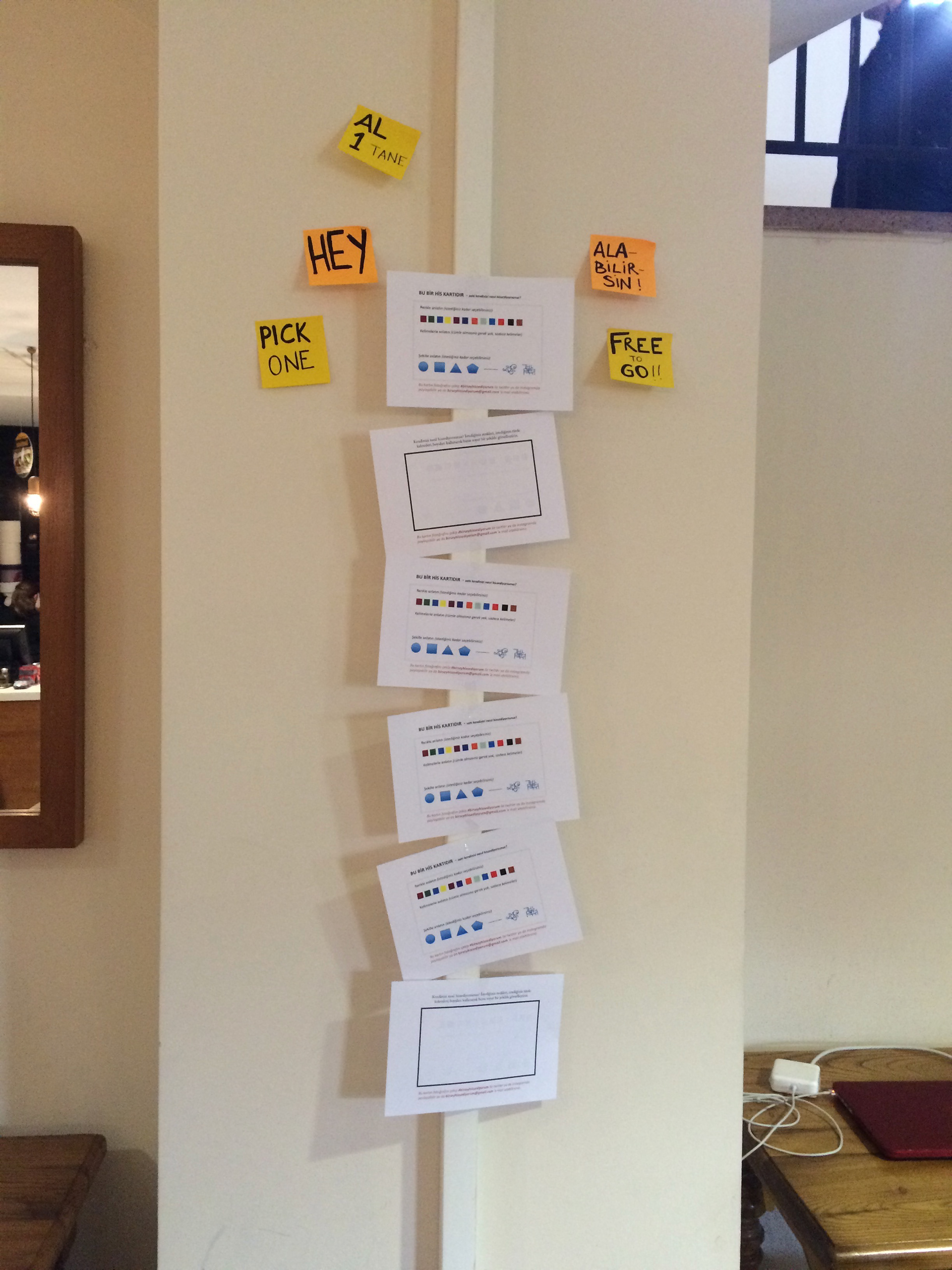

emotion probes wall

brainstorming

emotion map trial

example of an emotion probe

example of an emotion probe2

example of an emotion probe3

The data collection for Karakoy was based on observations, whereas for Koc University I collected data through emotion probes and a quantitative survey. For Karakoy, I only aimed to understand how people feel in each district or neighborhood. For Koc University, I aimed to understand both how people associate their own emotions with visual attributes and how they feel in particular places on campus. Based on the analysis, I realised that people express calm and positive emotions with smooth, curvy shapes, whereas they use sharp, edgy shapes to express negative and aggressive emotions. I translated these insights into the watercolour attributes "spread out" and "spreading from", respectively. On the maps, colors that spread out sharply represent the high-valence areas, whereas subtle coloring marks places where the emotions were relatively passive and stable.

2. Emotionscape Installation

bottom layer of Emotionscape

while expressing inner emotions

while shaping society's effect on his emotions

presenting Emotionscapes

This interactive tool gives the user a real-time, location-based emotion visualization experience. Light is projected from the top. In the first (upper) layer, the user visualizes her inner emotions with colors on the water. The reflection of the colors falls on the second (bottom) layer, where the user shapes dough to show how the physical environment (society, buildings, anything that surrounds her) affects her emotions. I chose marbling because the paint stays on top of the water without disappearing, and because it suits the nature of emotions: fluid, and influencing how we perceive the outer world. I used dough/clay to represent the outside world and let users shape it directly with their hands, since the physical world is tangible and influences us, and the installation creates a moment to express that influence.

3. Mediated Emotionscape

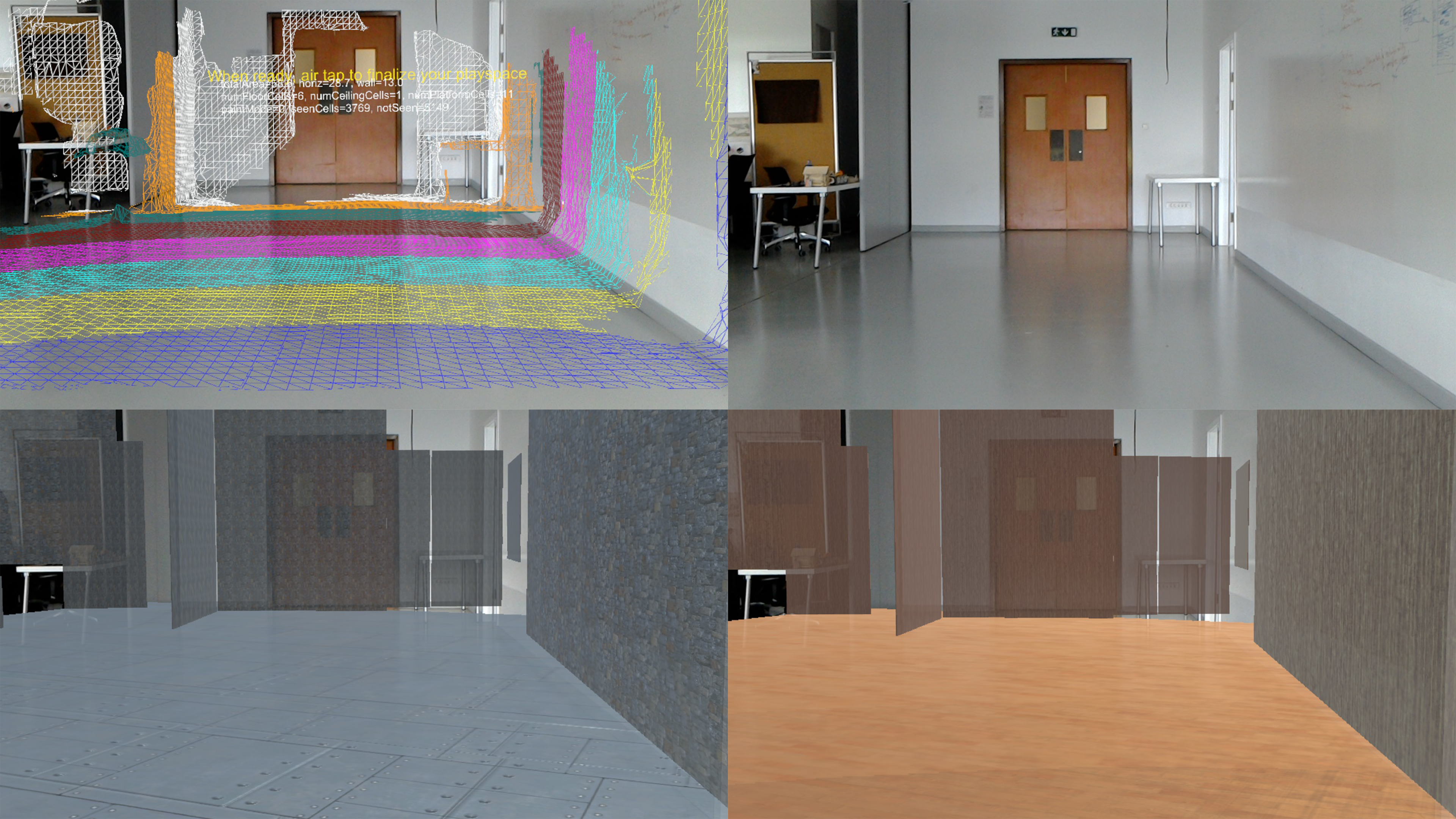

After we identified possible representations of emotions in both 2D and 3D, the question arose: “What would it look like if we could see emotions in the real world?” To explore this, we ran a study and developed the idea further on an augmented reality device, the HoloLens. We aimed to speculate on and explore how emotional awareness and communication can be enhanced through the mediation of spatial experience. We shared our insights about the methodology for a speculative system, and about the potential use cases and implications of an emotionally responsive space, in a short paper at VRST 2017 and a poster at CHI 2018.

Final Design

three modes concept description

emotion representations

spatial attribute changes

example of an emotional representation

example of an emotional representation

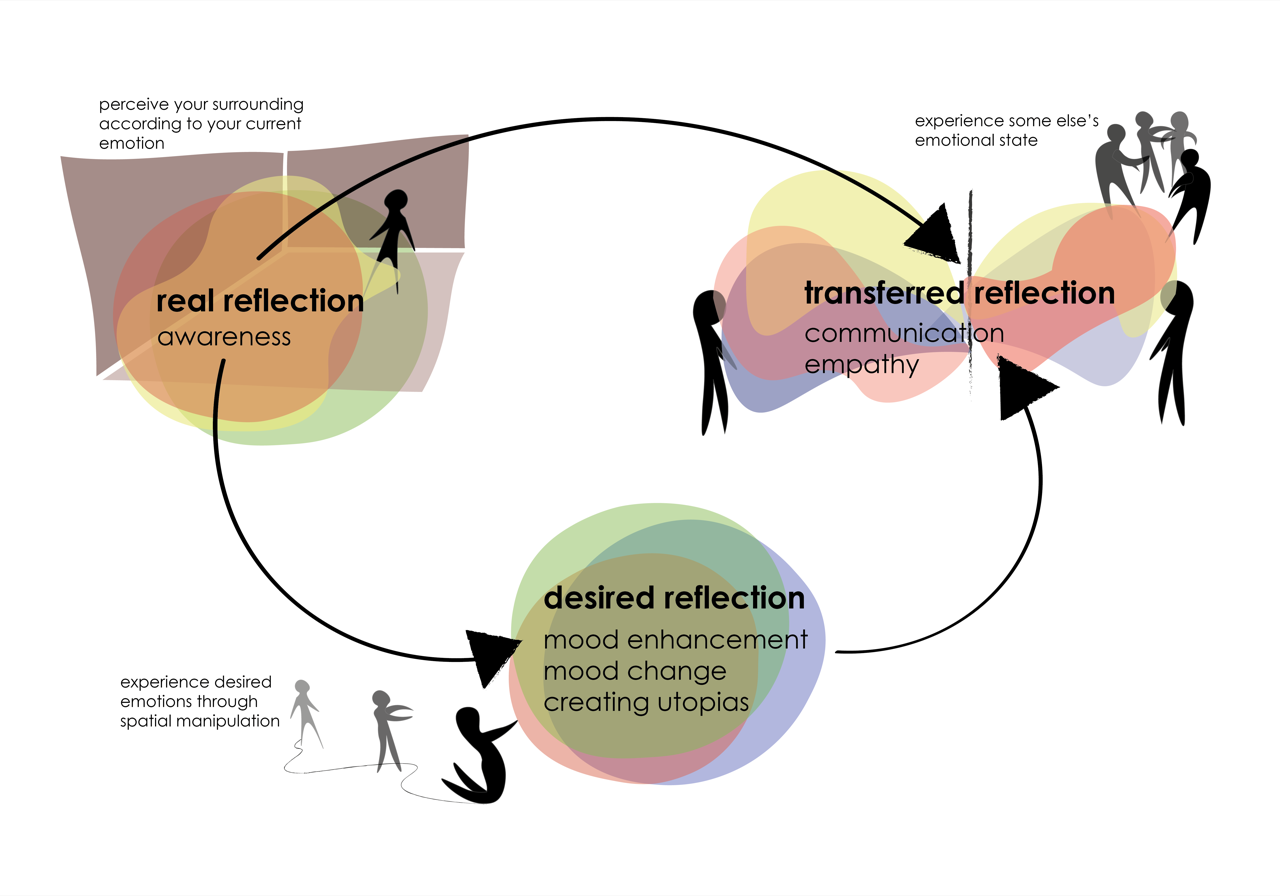

Based on exploratory user research, we designed and prototyped a conceptual system that mediates the spatial attributes of the surroundings according to the user’s choices. The proposed system has three modes: (1) real reflection, where the user experiences their real-time emotional state through mediated spatial attributes; (2) transferred reflection, where the overall emotional state of a collocated person or group is visible; and (3) desired reflection, where the user experiences any emotion they wish.

Exploratory Research: Data Collection & Analysis -iterate-

Instagram study invitation

Instagram account screenshot

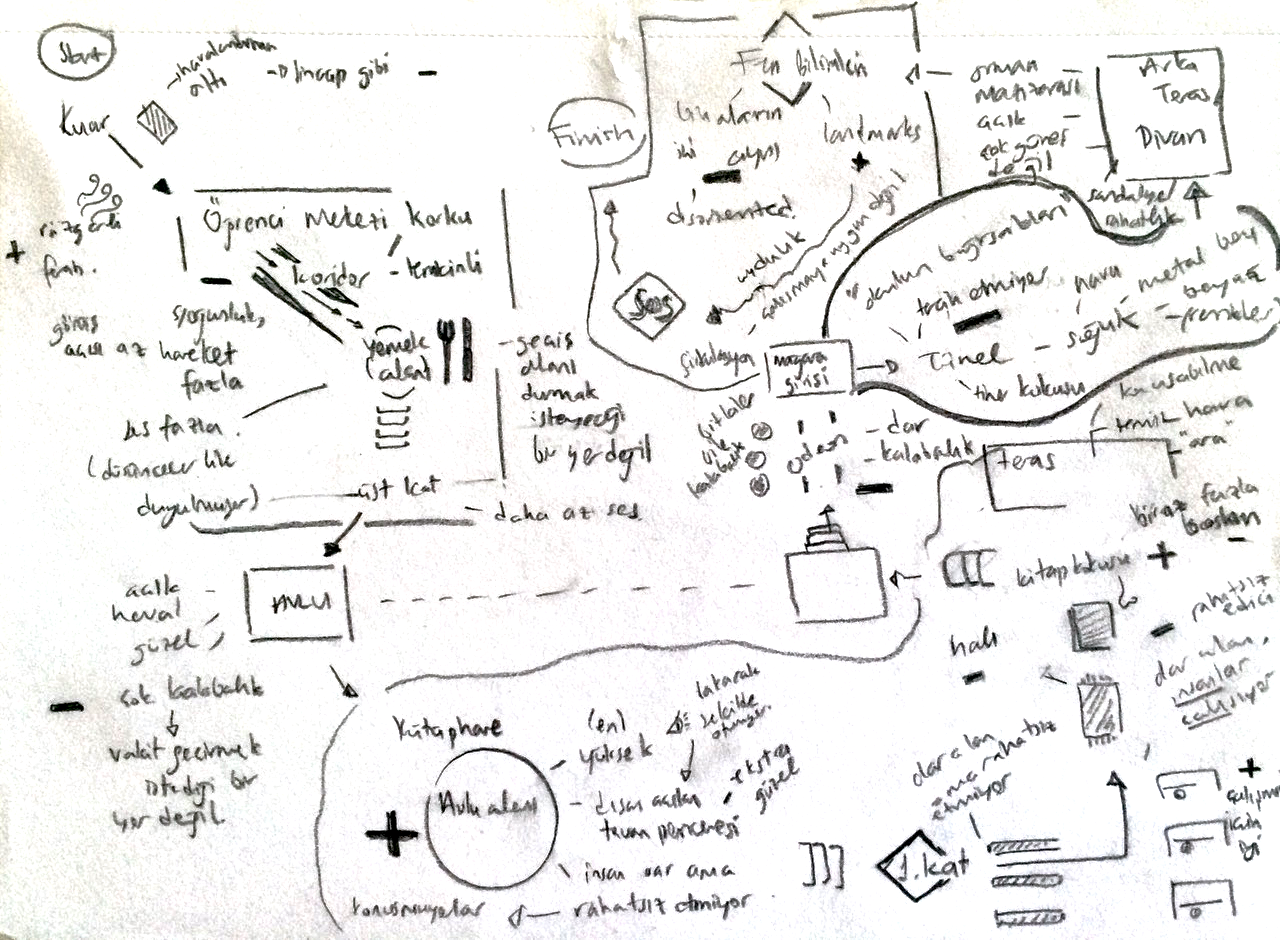

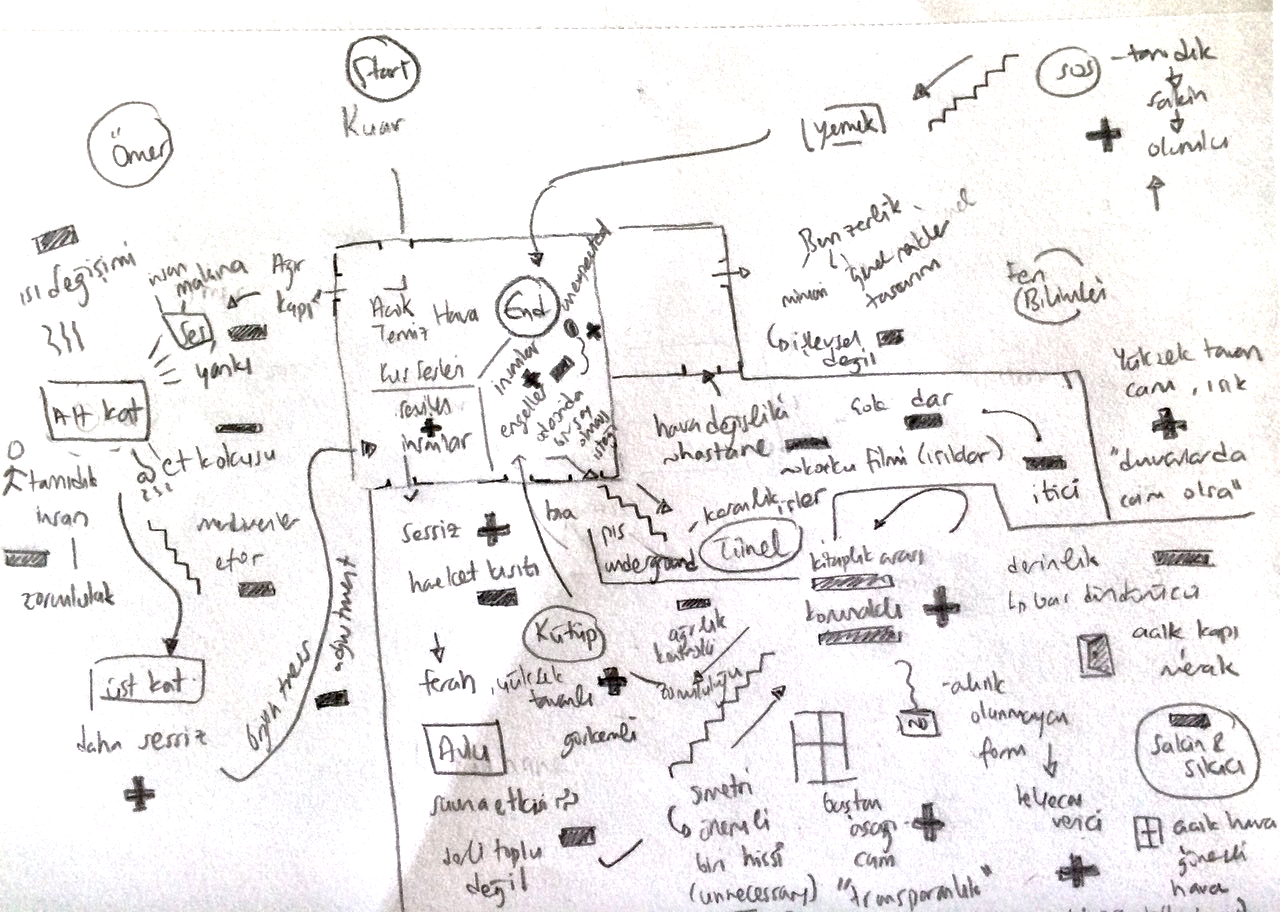

Emotion walk mapping

Emotion walk mapping

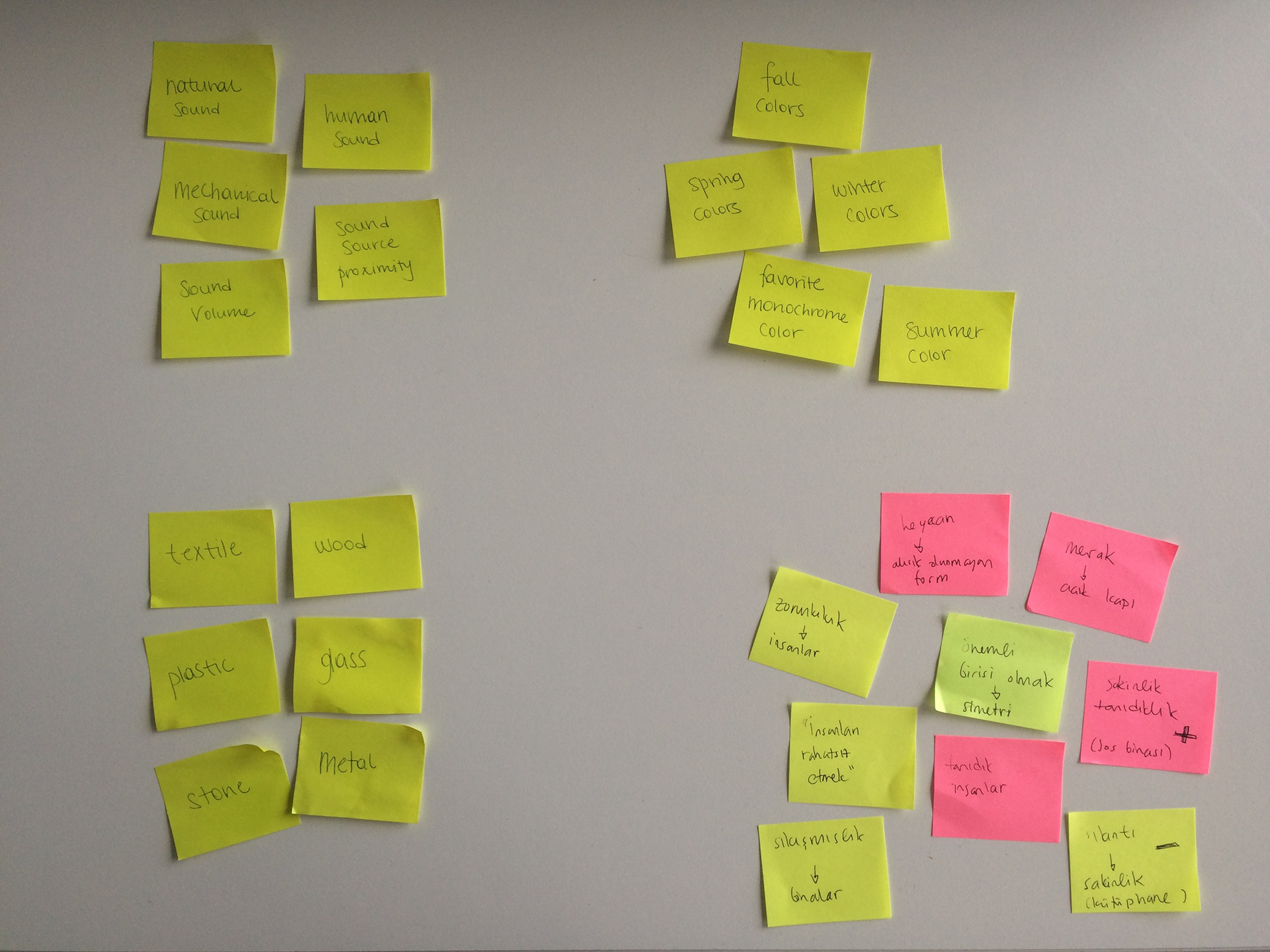

Instagram study + emotion walk analysis

Affinity diagram

Chosen attributes

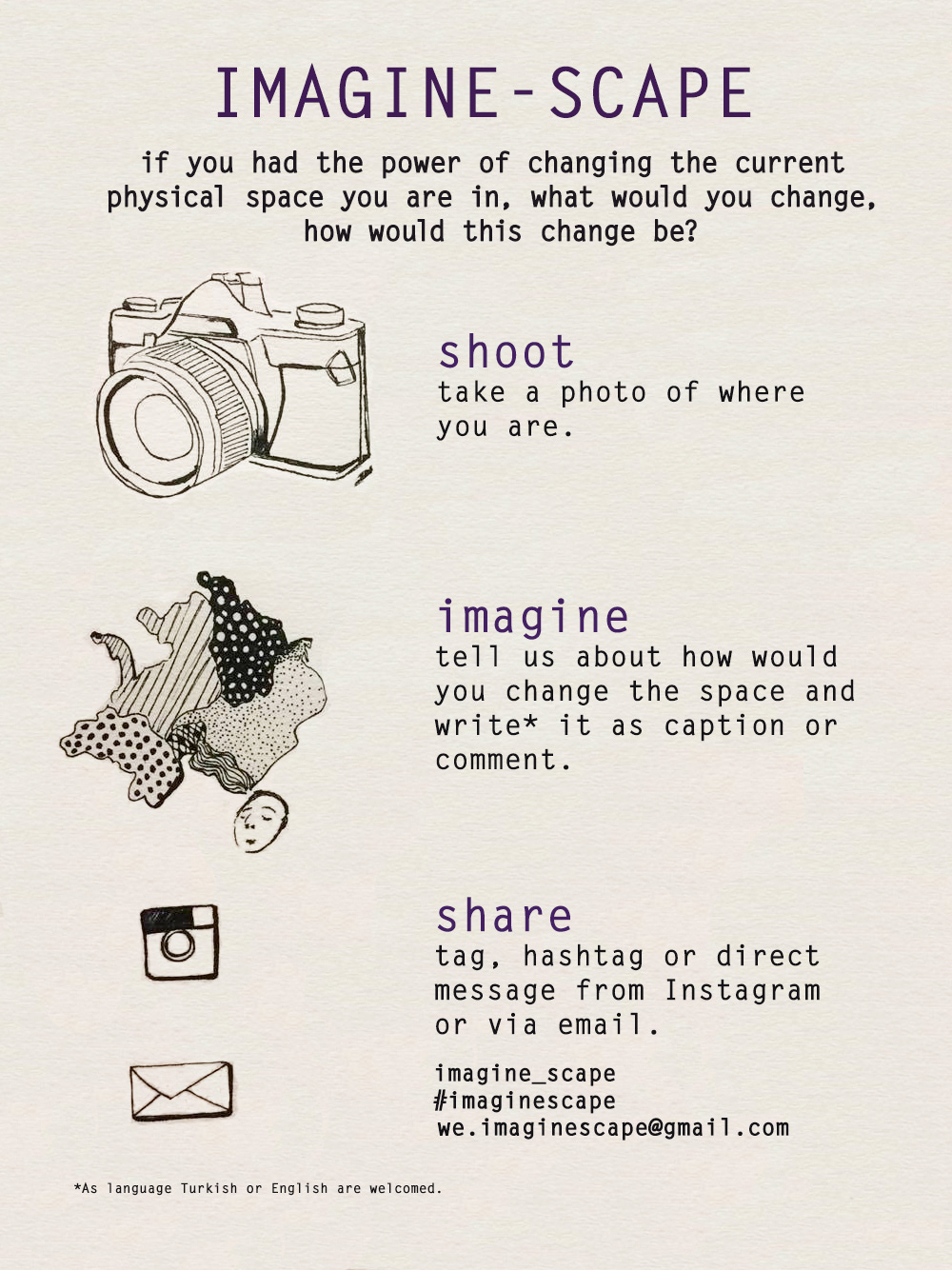

We conducted two exploratory design research activities simultaneously in order to understand, through self-reporting, which physical attributes of an environment affect an individual's perceived emotions. First, we announced an Instagram study through our personal social media channels and physical posters around the university campus. The study asked participants to take a photo of their surroundings, write a caption about the things they wanted to appreciate or change in that particular environment, and post it with a tag for the imagine_scape account. With the participants who were willing to take part in another round of the study, we conducted emotion walks. These walks followed a think-aloud procedure in which participants continuously commented on questions such as "How does this environment make you feel?", "Why?" and "How is it different from the previous experience?". The emotion walks aimed to collect in-depth data about attributes and their corresponding emotions. We then analysed the data on the walls by clustering the declared physical attribute types and the emotions associated with them. However, due to contextual differences (state of mind, personal preferences and associations), it wasn't possible to find a concrete link between a particular physical attribute and its corresponding emotion. From this study we therefore only retrieved the physical attribute types to investigate further, such as material type (categorical) or sound volume (scalable).

Data collection with Unity

Scalable emotion-attribute mapping

Categorical emotion-attribute mapping

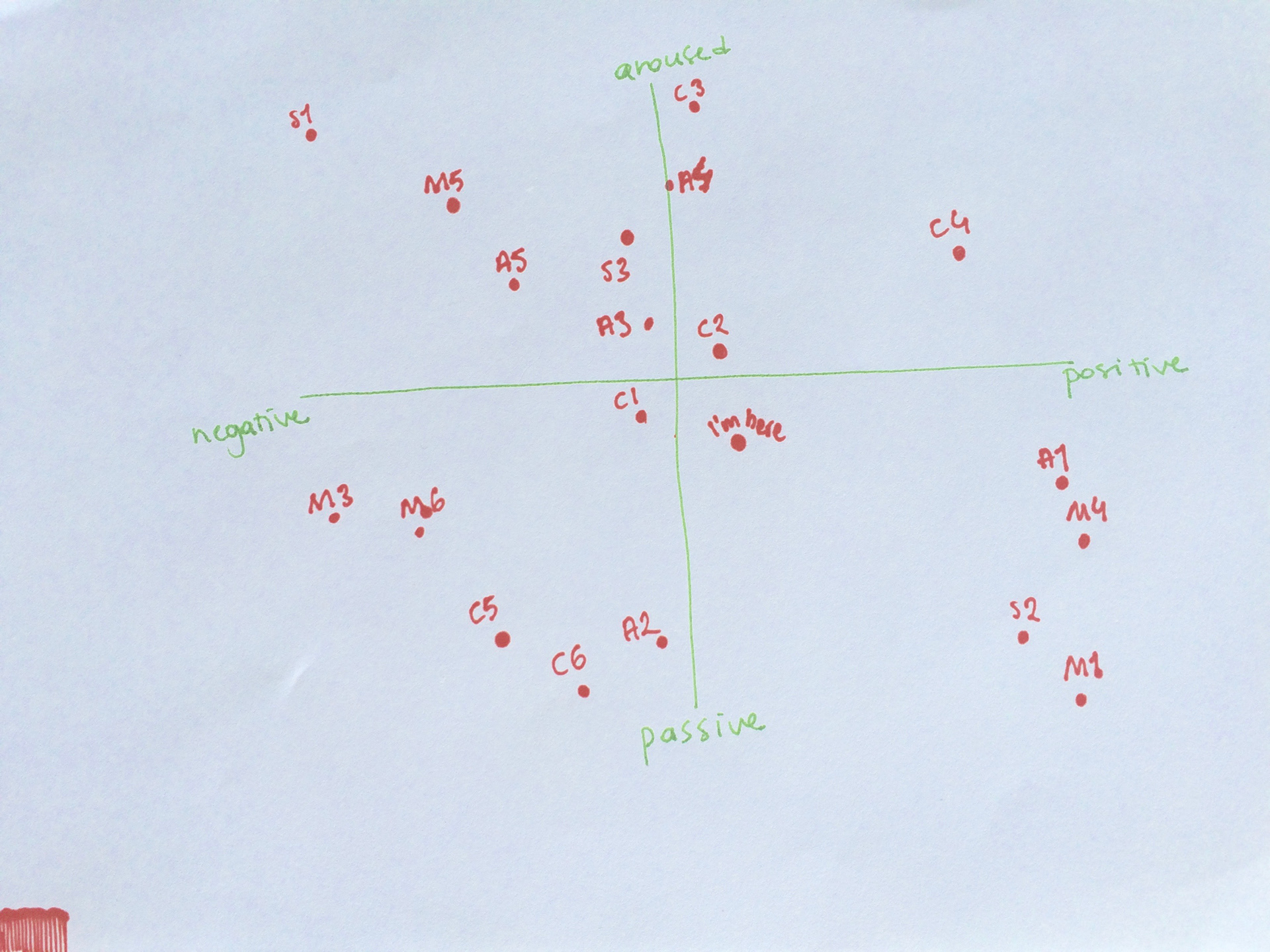

In order to generalise the effect of physical attributes on emotions, we conducted a generative session with 15 participants. To minimise contextual differences, we presented participants with an everyday scenario (starting a productive day without anything unexpected happening). We then asked participants to map out, separately for scalable and categorical attributes, how each attribute would make them feel, using the valence-arousal model of emotions. This mapping told us which kind of attribute corresponds to which kind of emotion for a given individual. It thus became possible to transform the environment into a "pleased" one, and even to guess how another person feels by seeing their environment through one's own emotion-attribute map.
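A per-person emotion-attribute map of this kind can be sketched in a few lines of Python. This is only an illustration, not the code used in the project: the `Rating` type, the `quadrant` and `attributes_for` helpers, the -1 to +1 scales, the assignment of the four labels (pleased, excited, aggressive, sad) to quadrants, and all rating values are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rating:
    """One participant's rating of an attribute value (assumed -1..+1 scales)."""
    valence: float  # -1 = unpleasant, +1 = pleasant
    arousal: float  # -1 = passive,    +1 = active


def quadrant(rating: Rating) -> str:
    """Name the valence-arousal quadrant a rating falls into.

    The label-to-quadrant assignment is an assumption for this sketch.
    """
    if rating.valence >= 0:
        return "excited" if rating.arousal >= 0 else "pleased"
    return "aggressive" if rating.arousal >= 0 else "sad"


# Hypothetical map for one participant: (attribute type, value) -> rating.
# "material" is categorical, "sound_volume" is scalable (binned here).
participant_map = {
    ("material", "wood"): Rating(0.6, -0.4),
    ("material", "concrete"): Rating(-0.5, -0.3),
    ("sound_volume", "low"): Rating(0.4, -0.6),
    ("sound_volume", "high"): Rating(-0.3, 0.8),
}


def attributes_for(emotion: str, emotion_map: dict) -> dict:
    """Collect the attribute values this participant associates with an emotion."""
    result = {}
    for (attribute, value), rating in emotion_map.items():
        if quadrant(rating) == emotion:
            result.setdefault(attribute, []).append(value)
    return result


print(attributes_for("pleased", participant_map))
# {'material': ['wood'], 'sound_volume': ['low']}
```

Looking up "pleased" in one person's map returns the attribute values that would make the environment feel pleased for that person. Reading the same environment through another person's map may name a different emotion, which is why the mapping is kept per individual.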

Later, we gave each participant a randomly chosen emotion from one of the quadrants (i.e., pleased, excited, aggressive, sad) and asked them to make a physical movement that corresponded to it. By tracking movement via Unity, we aimed to design a system that would automatically track gestures and determine the felt emotion. However, the limited amount of movement data did not provide significant evidence to continue with motion detection. Instead, we used voice recognition during testing to communicate "pleased", "excited", "aggressive" or "sad" environments for emotional sharing between individuals.

"Calm" environment of an individual